What does the term More-than-Moore (MtM) mean?

"More than Moore" (MtM) is a concept within the semiconductor and microelectronics industry that addresses technological developments beyond the scope of Moore's Law.

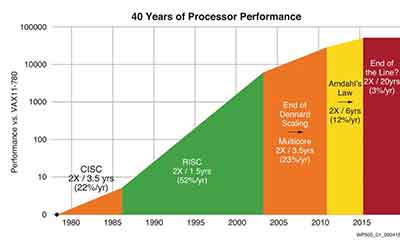

Moore's Law, named after Gordon Moore, co-founder of Fairchild Semiconductor and Intel, is the observation that the number of transistors on a microchip doubles approximately every two years, which in effect has increased performance and decreased cost.

However, as we approach the physical limitations of silicon-based transistors, this trend becomes increasingly challenging to sustain. Therefore, "More than Moore" was conceived as a way to continue the evolution of electronics and create more value in devices, not merely through miniaturization and performance enhancement, but by integrating different functionalities like sensors, actuators, and power management into the system.

So, More than Moore doesn't just aim to improve processing power as per Moore's Law. It integrates non-digital functionalities into systems, which allows a more efficient, diversified, and powerful device ecosystem. System-on-a-chip (SoC) and system-in-package (SiP) technologies are examples of this trend.

What Threatens Moore's Law?

What threatens Moore's Law? The question is not only whether it will end, but whether it can continue to hold in practice. In 1965, Gordon E. Moore, who later co-founded Intel, predicted that the number of components on an integrated circuit would keep doubling at a regular pace, a period he later set at two years. Doubling every two years is a compound annual growth rate of about 41%. The prediction was an empirical observation rather than a physical law, yet it has held remarkably well for decades. Techniques such as 3-D chip structures and multicore processors have helped extend it, but they do not remove the underlying limits. So what threatens the continuation of Moore's Law?
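The 41% figure follows directly from the doubling period. The sketch below is a minimal illustration; the function name `annual_growth_rate` is a hypothetical helper, not something from Moore's paper.

```python
def annual_growth_rate(doubling_period_years: float) -> float:
    """Compound annual growth rate implied by a fixed doubling period."""
    # Doubling in T years means growing by a factor of 2**(1/T) each year.
    return 2 ** (1 / doubling_period_years) - 1


for period in (1.0, 1.5, 2.0):
    rate = annual_growth_rate(period)
    print(f"doubling every {period} years -> {rate:.1%} growth per year")
```

A two-year doubling period gives roughly 41% per year, while the often-quoted 18-month period implies a noticeably higher annual rate.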

The question of what threatens Moore's Law isn't a simple one. The most important thing to ask is what is causing the slowdown, and the answer depends partly on the type of application. Compute-hungry workloads such as artificial intelligence (AI) feel the slowdown most directly, and many researchers predict that such applications will become much more expensive to run in the coming decades if chip improvements stall.

The number of transistors in an integrated circuit has doubled every 18 months to two years, according to Moore's Law. In 1968, Moore and Robert Noyce co-founded Intel, and the law became a driving force behind the success of Intel's semiconductor chips. Though the law has lasted for more than five decades, it does face challenges. In this article, we'll look at some of the most important factors.

The atomic nature of materials and the speed of light are the biggest threats to the future of computing, because they set hard limits on how small and how fast circuits can be. As long as the law holds, the industry will keep up an incredible pace of technological progress. For now, Moore's Law is still alive, though its end may be only a few years away. It remains the driving force behind many breakthroughs and a fundamental fact of the modern world.

The law's limits are not fully understood, but some are clear. Transistors are already so small that making them work in even smaller circuits is extremely difficult. As they are packed more densely, the heat generated per unit of chip area increases, and computers become less efficient unless that heat is removed. Hence, chip makers must consider cooling solutions as well as new technologies.

Is Moore's Law still valid in 2022?

In the last 20 years, general-purpose computer chips have become so similar to each other that they are no longer the best fit for every task. Cheap, standardized chips are still a good thing, but the approach has downsides. If general-purpose chips stop getting cheaper and faster, it becomes harder to develop smarter, faster, and more affordable devices, which in turn might hamper our ability to innovate and create new products. This is where specialized chips come into play.

Chips cannot keep getting smaller indefinitely. Shrinking will eventually run into unyielding laws of nature: the pathways on a chip cannot be made narrower than the atoms and molecules they are built from, and a transistor only a molecule wide cannot operate reliably. This means that relying on miniaturization alone is a dead end, and chip design has to carry more of the load.

The slowdown in shrinking, rather than the rise of specialized chips, is the real threat to Moore's Law. Specialized chips do not rescue the law itself, but without them the growth of computing power would be more limited. This is a good reason to invest in specialized hardware. And it's not just a matter of money: it's also an issue of energy use and environmental impact.

Even when Moore's Law isn't being followed, specialized chips could be the key to technological advancement. The trade-off is that a specialized chip is very good at one kind of task and may be of little use for others, whereas a standardized chip runs everything but less efficiently. Neither approach is purely good or bad, and the future of computing is likely to use both.

Things You Didn't Know About Moore's Law

You've probably heard of Moore's law, but did you know that its meaning is somewhat more complex? Intel co-founder Gordon Moore observed the doubling of transistors in integrated circuits every two years. While he didn't call this observation Moore's Law, he did make it as a prediction based on emerging trends in chip manufacturing. Over time, this insight became the golden rule that we all know today.

The idea behind Moore's Law dates back to 1965, when Moore published a paper on the exponential growth in the number of components on a chip. At the time, the most complex chips had only about 60 components, and that number had been doubling roughly every year. By 2006, Intel's dual-core Itanium 2 had about 1.7 billion transistors. The idea is so fundamental that it has changed how we do everything.

The paper was published in Electronics magazine in 1965 under the title "Cramming more components onto integrated circuits". Since then, the number of transistors on a microprocessor has grown past 1.7 billion. Although Moore's Law describes exponential growth in component counts, Moore made no such prediction for microprocessor clock speeds, disk drive capacity, or the Internet, even though the law is often stretched to cover them. In those looser forms, the law has had mixed results and is not definitive.

As transistors get smaller, the energy and effort consumed by manufacturing them increases. Intel has proposed measuring transistor density using the area of two common logic cells. Intel sees a path for continued Moore's Law growth through the next decade. Its chief architect, Alan Gara, who is working to build a supercomputer for Argonne National Laboratory near Chicago, said that the company expects its chips to have features smaller than seven nanometers, roughly ten thousand times thinner than a human hair.

Although Moore's Law continues to be widely quoted, there have been several historical errors associated with the term. One popular version, associated with Cringely, combines two of them: that transistor density doubles every 18 months, and that Moore was the one who stated it that way. In fact, Moore's 1965 paper described a doubling every year, and in 1975 he revised the doubling time to about two years. These errors resulted in the widespread misinterpretation of Moore's Law.

In 1965, Gordon Moore, who went on to co-found Intel, wrote an article for Electronics magazine. In it, Moore outlined his idea that the number of components on an integrated circuit would keep doubling at a regular rate, later set at every two years. The tech industry quickly adopted this idea as a planning target, and the world of computers has been changing thanks to Moore's Law ever since.

While popular versions of Moore's Law make it easier to understand, they don't explain the underlying engineering behind the doubling of transistors. Most versions focus instead on the economic benefits of the technology. The number of transistors on a silicon chip may be uninteresting to the average person, but what those transistors make possible, cheaper and more capable devices, is not. As long as the technological changes are valuable to us, economic considerations are a reasonable measure of progress.

Another example of Moore's Law's impact on the world economy is the steady shrinking of the features on a semiconductor chip. The silicon atoms themselves do not change size, but the structures built from them have shrunk by orders of magnitude, and today's leading chips are made on processes named as small as three nanometers. The exponential rate of technological advancement attributed to Moore's Law is a major reason for the growth of the information technology industry.

A good way to test Moore's Law is by charting the number of transistors on microprocessor chips over time. As a rule, the transistor count in microprocessors has doubled about every two years. You can find these numbers in the Microprocessor Quick Reference Guide by Intel. The results show that Moore's Law has held over several decades. The same growth also explains why chips run hot: more transistors in the same area produce more heat.
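As a rough illustration of such a test, the sketch below fits a straight line to the base-2 logarithm of transistor counts and reads off the doubling time. The five data points are approximate, widely published figures chosen here as an assumption; they are not copied from the Intel guide, and a fuller data set would give a somewhat different answer.

```python
import math

# Approximate transistor counts for a few Intel processors (illustrative
# assumption, rounded from commonly cited figures).
CHIPS = [
    (1971, 2_300),        # 4004
    (1978, 29_000),       # 8086
    (1985, 275_000),      # 80386
    (1993, 3_100_000),    # Pentium
    (2000, 42_000_000),   # Pentium 4
]


def doubling_time_years(points):
    """Least-squares fit of log2(count) against year; returns years per doubling."""
    xs = [year for year, _ in points]
    ys = [math.log2(count) for _, count in points]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx  # doublings per year
    return 1 / slope


print(f"fitted doubling time: {doubling_time_years(CHIPS):.2f} years")
```

On a logarithmic scale the counts fall close to a straight line, and for this small sample the fitted doubling time comes out near two years rather than 18 months.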

Is Moore's Law Still True?

Moore's Law, originally stated by Gordon Moore in 1965, predicted that the number of transistors on a microchip would double approximately every two years, leading to exponential growth in computing power. For decades, this trend held, but in recent years, the industry has encountered significant physical and economic challenges.

Is Moore's Law Still Holding?

- Physical Limitations: As transistors shrink to the atomic scale (below 5 nm), quantum effects such as electron tunneling make further miniaturization increasingly difficult.

- Economic Factors: The cost of advanced semiconductor fabrication has skyrocketed, making it less economically viable for companies to maintain the same pace of transistor scaling.

- Shift in Innovation: Instead of just transistor scaling, the industry is focusing on new architectural innovations, such as:

  - Chiplet architectures (e.g., AMD’s Ryzen and Intel’s Meteor Lake)

  - 3D stacking (e.g., Intel’s Foveros and TSMC’s CoWoS)

  - New materials (e.g., graphene, carbon nanotubes)

  - Alternative computing paradigms (e.g., quantum computing, neuromorphic chips)

What’s Next?

Moore's Law in its traditional sense is slowing, but computational power is still improving through alternative means, such as parallel computing, AI-driven chip design, and specialized processors like GPUs and TPUs.

What is The Future of Moore's Law?

Moore's Law, which describes the exponential growth of computing power, has held for decades. However, this rapid doubling cannot be sustained indefinitely, as it will eventually reach the physical limits of microelectronics. As such, some observers have speculated about the end of Moore's Law. Others believe that it will continue for at least a few more generations. According to one edition of the International Technology Roadmap for Semiconductors, the trend was expected to continue until 2016, after which the pace of doubling was expected to plateau.

Computer processors double every two years

The first revision to Moore's Law concerned the timing of the doubling. Moore originally described a doubling every year and later revised it to every two years; a popular variant puts it at every 18 months. The difference matters because it compounds: at an 18-month doubling time, about 31 doublings, or roughly 46 years, multiply the starting figure two billion times. Either way, the law suggests that processors double in complexity in two years or less.
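To see where a figure like "two billion times" could come from, the sketch below counts how many doublings a given growth factor needs and how long they take. The helper name `years_to_grow` is hypothetical, and reading the two-billion figure this way is an interpretation, not something the original claim spells out.

```python
import math


def years_to_grow(factor: float, doubling_period_years: float) -> float:
    """Years needed to grow by `factor` at a fixed doubling period."""
    doublings = math.log2(factor)  # number of doublings required
    return doublings * doubling_period_years


for period in (1.5, 2.0):
    years = years_to_grow(2e9, period)
    print(f"two-billion-fold growth at {period}-year doubling: about {years:.0f} years")
```

A factor of two billion is a little under 31 doublings, so the time it takes depends heavily on whether the doubling period is 18 months or two years.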

The idea that the number of components on a chip doubles at a regular pace was first proposed by Gordon Moore, a co-founder of Fairchild Semiconductor and Intel. In 1965 he predicted that the count would keep doubling every year for the next decade, reaching about 65,000 components on a chip by 1975. That pace did not last, and in 1975 he revised the prediction to a doubling every two years. Today, chips with billions of transistors fit on a fingernail-sized piece of silicon, and computer processors have become a powerful tool for tackling a wide range of tasks.

Although 3D architectures and 3D transistors have helped sustain the doubling, researchers are still unsure whether Moore's Law will hold. Some are confident that it can keep up its pace for at least another decade. At a doubling every two years, a chip with 50 billion transistors today would be followed in ten years by one with 1.6 trillion: five doublings, or 32 times (3,200%) as many transistors. Transistor count is not the same as speed, so the performance gain would depend on how those transistors are used.
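The 50 billion and 1.6 trillion figures are consistent with a two-year doubling period, as this small sketch shows. The function `projected_count` is a hypothetical helper, and the two-year default is an assumption.

```python
def projected_count(start_count: float, years: float,
                    doubling_period_years: float = 2.0) -> float:
    """Transistor count after `years`, assuming a constant doubling period."""
    return start_count * 2 ** (years / doubling_period_years)


today = 50e9
in_ten_years = projected_count(today, 10)
print(f"projected count: {in_ten_years:.2e}")
print(f"ratio to today:  {in_ten_years / today:.0f}x")
```

Ten years at one doubling every two years is five doublings, which is a 32-fold increase in transistor count and says nothing by itself about speed.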

However, the technology behind Moore's Law could be at an end soon. The original prediction was that the number of transistors on an IC would double every two years, and in recent years actual progress has fallen behind that pace. Moore's Law has been a landmark in the development of modern technology, but it may soon be history rather than a working rule. Much of the future of technology depends on what replaces it.

Thanks to Moore's Law, computer processors are many thousands of times faster than they were in the 1970s. In the past decade, however, gains have come more slowly than in earlier ones, and each doubling has depended on the creation of new manufacturing processes. To put the historical pace in perspective: if racing cars had improved at the same rate, we'd probably be seeing Lewis Hamilton and the Formula One drivers traveling 11,000 mph at Silverstone!

Falling component costs

The emergence of microprocessors such as the x86 family, which came to dominate personal computing by the early 1990s, was one of the most exciting technological developments ever. Previously, it was very difficult to make large-scale chips, but the cost of components has decreased dramatically, as Moore's Law describes. In his 1965 paper, "Cramming more components onto integrated circuits", Moore explained how chip production would be able to make increasingly complex chips at a lower cost per component.

The microprocessor is the brain of a computer, and according to a common reading of Moore's Law, the cost of a given amount of processing power halves about every 18 months. This means that, 18 months from now, computer chips with the same speed and power should cost half as much as they do today. Other technologies have also advanced rapidly. For example, disk drive storage capacity has been reported to double approximately every nine months, and the capacity of fast transmission over fiber-optic lines every twelve months. The price/performance curve has continued to advance exponentially.
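The effect of these different doubling periods is easier to see side by side. The sketch below compares the nine-, twelve-, and 18-month rates quoted above over an arbitrary three-year window; the window length and the helper names are assumptions made for illustration.

```python
def growth_factor(months: float, doubling_period_months: float) -> float:
    """How many times larger a quantity is after `months` at a fixed doubling period."""
    return 2 ** (months / doubling_period_months)


def relative_cost(months: float, halving_period_months: float = 18) -> float:
    """Fraction of the original cost remaining after `months` of steady halving."""
    return 0.5 ** (months / halving_period_months)


WINDOW_MONTHS = 36  # illustrative three-year window
for label, period in (("disk storage", 9), ("fiber capacity", 12), ("chips", 18)):
    print(f"{label}: {growth_factor(WINDOW_MONTHS, period):.0f}x after {WINDOW_MONTHS} months")
print(f"chip cost for the same performance: {relative_cost(WINDOW_MONTHS):.0%} of today's")
```

Small differences in the doubling period compound quickly, which is why the exact figure quoted for Moore's Law matters.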

Despite the rapid rise of technology, the cost of transistors is now part of the problem. For decades, transistors became cheaper with every generation, and chip manufacturing costs per transistor fell dramatically. More recently, that decline has slowed as manufacturing has grown more complex. The cost of component production is not the only problem facing Moore's Law, but it is a fundamental one, because falling costs are what made each new generation worth building.

Moore's Law predicts that the number of transistors on a chip will double every two years. This has had a great impact on the price of semiconductors as well. Moore's original observation was about cost: for any given technology there is a number of components per chip at which the cost per component is lowest, and that number kept rising. The law has helped to drive the cost per transistor down by several orders of magnitude.

Although the cost of transistors fell steadily over more than four decades, the economics behind Moore's Law rest on an assumption. The idea that transistor counts double every two years implicitly assumes that consumer demand will continue to grow in tandem with production. Without that demand, Moore's Law would have failed: the cost of each new generation of chips would have become too high for the market to sustain. Despite the difficulty of predicting such a trend, it held for decades.

Increase in computing power

The doubling of computing power every two years is a great thing, but there's a dark side. As transistors have gotten smaller, the power each one consumes has not fallen as fast as its size. That means that the power density of a chip, the power dissipated per unit of area, has been increasing rapidly. This has presented a problem for chip architects, who have had to figure out how to manage power. The good news is that managing power well lets Moore's Law continue for longer.
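A simplified model shows why power density rises once supply voltage stops scaling. The sketch below uses the standard dynamic-power relation P = a·C·V²·f and the classic scaling assumptions (capacitance and area shrink with feature size, frequency rises); those assumptions and the 0.7 shrink factor are brought in for illustration, and static (leakage) power is ignored.

```python
def dynamic_power(capacitance: float, voltage: float, frequency: float,
                  activity: float = 1.0) -> float:
    """Dynamic switching power: P = activity * C * V^2 * f."""
    return activity * capacitance * voltage ** 2 * frequency


def power_density_change(shrink: float, voltage_scales: bool) -> float:
    """Relative power density after one shrink step (1.0 means unchanged).

    Assumes capacitance scales by `shrink`, frequency by 1/shrink, and
    transistor area by shrink**2. Voltage scales by `shrink` only when
    `voltage_scales` is True.
    """
    capacitance = shrink
    voltage = shrink if voltage_scales else 1.0
    frequency = 1 / shrink
    area = shrink ** 2
    return dynamic_power(capacitance, voltage, frequency) / area


print(f"voltage scales with size: {power_density_change(0.7, True):.2f}x power density")
print(f"voltage held constant:    {power_density_change(0.7, False):.2f}x power density")
```

When voltage shrinks along with the transistor, power density stays flat; when it cannot, each generation roughly doubles the heat per unit of area in this model.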

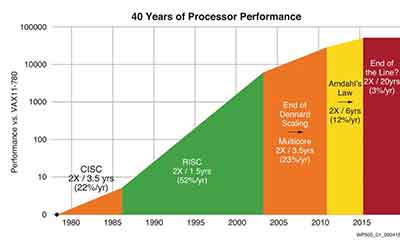

In the early days, the processing power of a computer was typically expressed in terms of its memory's cycle time. Later, VAX MIPS and MFLOPS became the standard units for integer and floating-point performance. In the 1980s, standard test programs were introduced to measure processing speed. These benchmarks were not designed to be comparable with earlier measures, which makes long-term comparisons of computing power difficult.

In 1965, Gordon Moore, who later co-founded Intel, wrote an article in Electronics magazine predicting that the number of components on a chip would keep doubling at a regular pace, later set at every two years. The predictions made by Moore's Law have been largely realized, though it is less certain that this will continue in the coming years. A major positive side of the trend is that the cost per component has continued to decline. This allows us to create better computers that can do more.

Moore's Law has many extensions, but it's not the only thing we should be thinking about. In its looser forms it has been applied to several aspects of digital technology, such as chip size, density, and speed. More computing power generally increases efficiency and decreases cost. However, it's important to understand the limitations of Moore's Law, and there are a few factors that you should be aware of.

As Moore's Law plays out, computer prices fall. In the early 1990s, computer prices fell by around 20-30 percent, and the trend has continued since then. Estimates of how quickly the price per component halves vary widely, from about 18 months to between 32 and 45 months. There is no hard evidence to settle which figure is right, but it's an interesting question to consider the next time you're wondering whether to buy a new computer.

Impact on high-tech society

One of the many benefits of Moore's Law is the reduction in cost per component. By making computing devices smaller and cheaper, it has made them affordable for the general public. This has also facilitated the development of consumer electronics and changed many social customs. In January 2008, the Internet included 541,677,360 host computers. It is not surprising, then, that a basic cell phone can cost as little as $25 or less.

Another benefit of Moore's Law is that it has become self-fulfilling. It has inspired breakthroughs in miniaturization and design. The resulting computing power has multiplied exponentially. This trend continues to this day, despite the skeptics' predictions. As companies and engineers realized that Moore's Law was here to stay, they continued to push the technology forward. With each new development, Moore's Law inspired engineers to continue the advancement of computing power.

The accelerating pace of technology development is another benefit of Moore's Law. Computers have become more advanced than ever, with the number of transistors doubling roughly every 18 months to two years. This phenomenon has benefited everyone from the average person to multinational corporations. The exponential growth in computing power has revolutionized how we live, work, and play. But how does this impact our everyday lives? How do we measure the growth of technology?

The most obvious consequence of the end of Moore's Law is that technology will no longer get cheaper as quickly as it did before. Some forecasts held that computers would reach their limits around 2020, because transistors can't function reliably in ever smaller circuits at higher temperatures. At that point, cooling the transistors takes more energy than the transistors themselves use. As a result, there will be a huge shift in technology, and this change will be felt in our everyday lives.

The computer industry has been undergoing transformation due to the advancements in computer technology. Computers have changed the way we operate and how we live in our society. They are everywhere, from RFID tags used to track luggage to billions of mobile phones. The impact of silicon on our lives is staggering - from next generation Legos to table lamps that change color with the stock market. Computing and high-tech society are becoming increasingly interconnected, with a new wave of possibilities in the near future.

Is the Number of Transistors on a Compute Chip Still Doubling Every 2 Years?

In 1965, an empirical rule that became known as Moore's Law was proposed, predicting the doubling of the number of transistors on a compute chip at a regular pace, later set at every two years. The observation was soon well known among scientists working in the field and was subsequently named Moore's Law. The law has led to dramatic advancements in computing and has helped push technology forward over many challenges. It is still broadly valid today, but there are some potential limits to its use.

Intel's newest fabrication plants have been designed to build chips with features as small as 10 nanometers. Despite the difficulty, the number of transistors on a chip is still increasing, but the pace of doubling is slowing. The trend is not sustainable indefinitely: the cost of producing chips is rising and the atomic nature of semiconductors poses a challenge.

While Moore's Law is widely accepted, the reality has been more erratic. The law has held up for many years, but some studies have revealed historical errors and data that contradict popular versions of it, which makes it easy to spot the discordance between the law and reality. For example, if Moore's Law is taken to predict transistor counts doubling every 18 months, then the chips of the year 2000 fall about 1.4 billion transistors short of the prediction. If we change the doubling time to two years, the discrepancy is much less severe.
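The size of that discrepancy can be checked with a short calculation. The sketch below assumes a starting point of the Intel 4004 in 1971 (about 2,300 transistors) and compares the projections for 2000 with the Pentium 4 (about 42 million); both reference chips are assumptions chosen for illustration, since the text does not say which data the figure is based on.

```python
def predicted_count(start_count: float, start_year: int, end_year: int,
                    doubling_period_years: float) -> float:
    """Projected transistor count at `end_year` for a constant doubling period."""
    doublings = (end_year - start_year) / doubling_period_years
    return start_count * 2 ** doublings


START_YEAR, START_COUNT = 1971, 2_300   # Intel 4004 (assumed starting point)
ACTUAL_2000 = 42_000_000                # Pentium 4, approximate

for period in (1.5, 2.0):
    predicted = predicted_count(START_COUNT, START_YEAR, 2000, period)
    shortfall = predicted - ACTUAL_2000
    print(f"doubling every {period} years: predicted {predicted:.2e}, "
          f"shortfall {shortfall:.2e}")
```

Under these assumptions the 18-month version overshoots by well over a billion transistors, while the two-year version lands within the same order of magnitude as the actual chip.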

Although Moore's Law held true for decades, the future may not be so optimistic. The number of transistors on a compute chip used to double every two years, but that is no longer reliably the case, and by some measures recent progress has been about half as fast as the law predicts. If the pace does not recover, Moore's Law will be outdated.

How Will CPUs Continue to Get Better After We Can't Shrink Transistors?

You might be wondering how CPUs will continue to get better after we've hit the limit on shrinking transistors. First, it is important to note that 10nm and 7nm are marketing terms. These numbers no longer measure any physical feature; they loosely indicate the density of transistors, which is a measure of how much stuff you can fit onto one chip. The smaller the transistors, the more stuff you can fit on one chip, or the more chips of the same design you can fit on one wafer.

Moore's Law

If you're wondering how chips keep getting smaller and faster, you've probably heard of Moore's Law. This rule of doubling the number of transistors in a chip every 18 months to two years describes how chips get more capable while improving their power efficiency. But does Moore's Law still apply today? The answer is a qualified yes: it's still relevant, although the pace of doubling has slowed over the past two decades.

When did Moore's Law begin to have an impact on the technology industry? From the start, it directly affected the evolution of computing power. As transistors in integrated circuits got smaller, they switched faster, and the faster the transistors, the faster the integrated circuit could run. The cost of fabrication also increased dramatically, putting upward pressure on the price of leading-edge CPUs. So the process continues to evolve, but at a slower rate.

However, despite the success of the theory, it has become increasingly difficult to continue the upward trend in transistor density. While it's still possible to increase the number of transistors in a chip, the process is becoming more difficult and expensive. Luckily, computing companies have found ways to make good use of the extra transistors, improving CPU performance even as shrinking slows.

Transistor density is essentially limited by the size of the transistor gate, and the gate cannot keep shrinking forever. New transistor designs offer a way forward, so density can continue to increase. For example, nanowire transistors and stacked "layer cakes" of processor elements could quadruple transistor density.

Moore's Law was first published in 1965. Gordon Moore co-founded Intel with Robert Noyce in 1968, and the law became the driving force behind the success of Intel's semiconductor chips. The law is still alive today, though it is hard to see how things will go in the near future. Progress will not stop, but it's important to remember that there are limitations to Moore's Law.

Problems with Moore's Law

The first problem with Moore's Law and CPUs continuing their exponential growth is the physical limit on shrinking transistors. The cost per transistor used to fall sharply from generation to generation, but now it falls only slightly, as diseconomies of scale weigh it down. The latest chips are made of billions of transistors, each far too small to see with the naked eye. To keep shrinking, engineers will have to build transistors from features only a few atoms wide. These new chips will be expensive, and the fabrication plants to produce them will cost billions of dollars.

For all its benefits, the shrinking process isn't sustainable. We can't shrink transistors forever, though we can still improve chips in other ways. The pathways on a leading-edge chip are already only a few times wider than a molecule, and at that scale electrons can leak off the path through quantum tunneling.

However, the slowdown in hardware isn't the only problem. Software can also hold CPUs back once we can't shrink transistors. Even if chip technology continues to improve, for example by adding more cores, software engineers will have a harder time making use of it. This creates bottlenecks in the software that limit the gains users actually see.

Another problem with Moore's Law and CPUs continuing their improvement is the cost of chip plants. Each new generation of plants is extremely expensive, even though it can produce more chips on a single silicon wafer. And when extra transistors are spent on additional cores, the software needs to be rewritten to divide tasks among those cores. Ultimately, Moore's Law may continue to improve CPUs, but not necessarily by making them smaller.

Another problem with Moore's Law and CPUs continuing their improvement is that the time it takes for a signal to travel along a wire across the chip can exceed the clock period. The delay is significant enough to limit the frequency of a CPU. Nevertheless, with advances in other areas, such as chip architecture and software, we can still expect the performance of CPUs to increase.
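A back-of-the-envelope calculation shows why wire delay limits clock frequency: even at the speed of light, a signal covers only a short distance in one clock cycle, and real on-chip signals are considerably slower. The sketch below is an upper bound only; the 3 GHz clock and the `signal_speed_fraction` parameter are illustrative assumptions.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def distance_per_cycle_cm(clock_hz: float, signal_speed_fraction: float = 1.0) -> float:
    """Distance a signal travels in one clock period, in centimeters.

    `signal_speed_fraction` is the signal speed as a fraction of the speed
    of light (1.0 gives the physical upper bound).
    """
    period_s = 1 / clock_hz
    return SPEED_OF_LIGHT_M_PER_S * signal_speed_fraction * period_s * 100


print(f"light at 3 GHz:           {distance_per_cycle_cm(3e9):.1f} cm per cycle")
print(f"half light speed, 3 GHz:  {distance_per_cycle_cm(3e9, 0.5):.1f} cm per cycle")
```

Raising the clock frequency shortens the distance a signal can cover per cycle, so beyond some point signals can no longer cross the chip within a single clock period.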

Possible solutions

While the high-tech industry loves to talk about exponential growth and the digitally driven "end of scarcity," there's a limit to how small we can make chip components. The latest chips contain billions of transistors that are far too small to see with the naked eye. Once we hit this limit, engineers would have to build transistors from features only a few atoms wide, and building fabrication plants to make such chips would cost billions of dollars.

While the second half of the 20th century and the start of the 21st saw computer speed increase through shrinking transistors and increasing their density, the future of computing may depend on a whole new paradigm. Eventually, technologies such as quantum computing may improve the speed of computing and, with it, human health, safety, and productivity. But until then, there are some possible solutions. This article will discuss some of these.

The next generations of TSMC and Intel processes are still on the drawing board. These companies are experimenting with vertical 3D transistors and different chip layouts. Intel has also considered replacing silicon with other materials. Compounds of elements from the third and fifth columns of the periodic table, known as III-V semiconductors, can carry electrons more easily than silicon. Unfortunately, neither of these solutions is cost-effective or scalable yet.

Another solution to the slowing growth of silicon chips is increasing the speed of the switches in the chip. The speed of the switches is measured in hertz, or cycles per second. A gigahertz chip runs a billion cycles per second, a thousand times as many as a megahertz chip, which runs a million. In practice, doubling the clock speed does not double the speed of a whole task: think about the time it takes for an automobile to go through a toll booth, where a faster car saves only a few seconds.

Another method of improving CPUs is die shrinking, for as long as transistors can still be made smaller. This process moves an existing design to a smaller manufacturing scale and allows chip makers to fit more chips on a single wafer, which means the cost of each chip is reduced. A smaller process also uses less material and reduces the amount of power used and heat generated, which in turn reduces the amount of cooling required.
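The economics of a die shrink can be sketched with a common first-order estimate of dies per wafer. The formula below subtracts an edge-loss term from the simple area ratio; it ignores yield, scribe lines, and edge exclusion, and the 300 mm wafer and die areas are illustrative assumptions rather than figures from any particular process.

```python
import math


def dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """First-order estimate of whole dies on a round wafer.

    Uses the common approximation: wafer area / die area, minus a term for
    partial dies lost around the wafer edge.
    """
    radius = wafer_diameter_mm / 2
    gross = math.pi * radius ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(gross - edge_loss)


before = dies_per_wafer(300, 100)  # original design, 100 mm^2 die
after = dies_per_wafer(300, 50)    # same design after a shrink to 50 mm^2
print(f"dies per wafer before shrink: {before}")
print(f"dies per wafer after shrink:  {after}")
```

Halving the die area slightly more than doubles the die count in this estimate, because smaller dies also waste less of the wafer edge.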

Progress on shrinking transistors

The process of shrinking transistors in CPUs is a key component of the advancement of computing. The process has several challenges, which are related to Moore's Law. First of all, it requires transistor components to be smaller and work faster. In addition, transistors tend to become less efficient as they become smaller, and densely packed transistors are harder to cool. Further, transistors also have problems with dynamic (switching) and static (leakage) power.

While Moore's Law is the empirical rule that describes the number of transistors on silicon chips, it may not continue forever. In fact, transistors were expected to stop shrinking after 2021, according to the final International Technology Roadmap for Semiconductors (ITRS), released in 2016. The ITRS predicted what technologies would be needed to manufacture the transistors of the next 15 years. Beyond that point, it would no longer be economic to make transistors smaller.

Another way to look at transistor density is as moving transistors closer together. As transistors become smaller and closer together, the number of devices on a single chip increases. Furthermore, shorter links between devices reduce latency, which helps improve performance. This process is continuing thanks to recent developments in nanotechnology: nanowires and layer cakes of processor elements could further increase the density of transistors.

Historically, the name of a logic technology node matched the gate length of its CMOS transistors. Over time, the node number became a naming convention rather than a measurement. At the 130 nm node, for example, the transistor gates were actually about 70 nanometers long. The process continued along the Moore's Law path.

Through the mid-2000s, the semiconductor industry saw clear economic benefits from moving to smaller transistors, because companies could pack more chips onto a single silicon wafer. However, recent nodes have arrived more slowly and cost more, forcing companies to add steps and costs in order to fit chips into thinner, more compact packages. As a result, the production cost of transistors is going up.

What is the Impact of Moore's Law on the Semiconductor Industry?

Moore's law has had a major impact on the semiconductor industry, because it predicted dramatic reductions in the cost of electronic instruments. It has also influenced chip design and manufacturing costs. In a nutshell, Moore's law describes the emergence of a new type of economy based on rapid change in information processing technologies.

Moore's law defines a completely new economy

The semiconductor industry has been affected by Moore's Law in many ways. In the long run, the technology that drives the industry will likely be exponentially more efficient and cheaper than it is today. It is a phenomenon that has pervaded much of the modern world, with some estimates placing the credit for more than 40% of global productivity increases on improvements in the performance and cost of semiconductors.

The semiconductor industry began as a series of incremental process innovations. The first major breakthrough was the development of the point-contact transistor. In the mid-1950s, the industry was still in its infancy. The firms that developed transistors and other semiconductor devices came to be called chip makers, and the semiconductor equipment industry was born alongside them.

While Moore's law seems to be a good thing from a technological and economic perspective, the semiconductor industry faces a number of potential problems. Despite the positive effects of Moore's law, costs per transistor are rising at an alarming pace, and the capital equipment required to produce next-generation nodes is becoming increasingly expensive. Furthermore, if costs continue to rise at the current rate, the industry will be forced to invest huge sums of money in retooling and new fabs.

The development of new chip technologies is based on Moore's Law, a principle that has been used to drive innovation in the semiconductor industry for decades. This law is dependent on the overall economic situation and could have dramatic effects on chip makers. Moore's Law may also be affected by the changing expectations of consumers and the industry's users.

Moore's law continues to improve the performance and cost of semiconductors. As this happens, the semiconductor industry is expected to consolidate into oligopolies with only a handful of leading edge players. Some companies will become fabless, while others may opt out of the industry entirely. Ultimately, the leading semiconductor companies will be vertically integrated or develop in-house competence for critical production steps.

One of the greatest challenges in the semiconductor industry is that the technology is extremely expensive to manufacture. As a result, new chip plants must be built in order to produce more chips per silicon wafer. Shrinking cannot continue indefinitely, because a transistor cannot be made smaller than the atoms and molecules it is built from. Until that limit is reached, the size and cost of chips are expected to keep decreasing as the technology improves.

It predicts that the cost of electronic instruments will fall greatly

Moore's law is a prediction about the rate at which technology improves. It has been used to guide semiconductor industry long-term planning and to set research and development goals. The law is considered a self-fulfilling prophecy and has been shown to lead to continual changes in the field of digital electronics, including processor prices, memory capacity, and sensor improvements. It has also been linked to productivity gains and economic growth.

Moore's law has been widely quoted, but it has also been subject to controversy. Although the concept has been widely accepted for years, it has proved less accurate than many people thought. Some of its predictions have been historically wrong, and some studies point to data that contradict it. Despite these differences between Moore's law and reality, the broad trend is still easy to recognize. The law implies that the cost of electronic instruments will fall greatly, but this is not always the case.

In 1965, when Moore wrote his article on integrated circuits, discrete transistors were still a hot commodity. Consumer electronics did not need expensive ICs, so ICs were mostly used in military applications, where cost mattered less. With his article, Moore was trying to promote the use of ICs, and he later admitted that he had not been very confident in his prediction.

Since the discovery of semiconductors, the cost of manufacturing electronic instruments has decreased dramatically. The underlying technology in semiconductors allows for higher integration and cost reduction, making our lives and businesses more comfortable. However, Moore's law did not anticipate the invention of the Internet, or even the invention of the microprocessor.

While it is widely accepted that Moore's Law will eventually come to an end, the exact time frame is still unknown. Its end was once predicted for 2013, but that date has been pushed back repeatedly. In fact, some academics think that the ultimate limits of Moore's law could be as much as 600 years away.

According to Moore's law, the number of transistors in a dense integrated circuit doubles every two years. This is not a law of physics, but a prediction made from a historical trend.
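The arithmetic behind a two-year doubling can be checked with a few lines of Python. The sketch below converts a doubling period into an equivalent compound annual growth rate and projects a transistor count forward. The starting count is an arbitrary illustrative number, and the function names are not from any library.

```python
def annual_growth_rate(factor: float, years: float) -> float:
    """Compound annual growth rate for growing by `factor` over `years`."""
    return factor ** (1.0 / years) - 1.0


def projected_count(initial: float, years_elapsed: float,
                    doubling_period_years: float = 2.0) -> float:
    """Project a count forward assuming a fixed doubling period."""
    return initial * 2.0 ** (years_elapsed / doubling_period_years)


if __name__ == "__main__":
    rate = annual_growth_rate(2.0, 2.0)
    print(f"Doubling every two years = {rate:.1%} growth per year")
    start = 1_000  # hypothetical starting transistor count
    for years in (2, 10, 20):
        print(f"After {years} years: {projected_count(start, years):,.0f} transistors")
```

Doubling every two years works out to roughly 41 percent growth per year, and ten years of it multiplies the starting count by 32.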

It influences semiconductor design

Moore's Law has played a central role in the development of semiconductor technology. It has driven technological advancement, economic growth and productivity. Its underlying principle states that computing power and density will increase and the transistors on integrated circuits will become smaller and more efficient with time. The law is a useful metaphor for the advancement of technology, and is the foundation of semiconductor industry R&D targets.

The theory behind Moore's Law was first published in Electronics Magazine on April 19, 1965. The article featured a graph of the relative manufacturing cost per component against the number of components per integrated circuit. For each year shown, the curve has a minimum-cost point. Packing in more components than that brings the cost per component up rather than down.

In the past, the semiconductor industry relied on traditional 2D "planar" transistor designs. However, as transistor sizes dropped below 28nm, leakage current increased. To solve this problem, the industry developed a 3D transistor. In this design, the channel is a thin vertical fin that the gate wraps around on three sides, which gives better control of the channel and allows a higher density of transistors. It is called a FinFET because of its fin-like appearance. Intel was the first company to commercially produce a FinFET process, starting at the 22nm process node.

The early 1950s saw rapid growth in research on solid-state technology, which led to the birth of a new industry. The group that founded Fairchild Semiconductor, often called the "traitorous eight", left Shockley Semiconductor Laboratory in 1957. Fairchild became one of the largest manufacturers of semiconductors in the world and spawned more than 150 spin-off companies, now known as the Fairchildren.

Moore's law is the basis of much of the advancement in semiconductor technology. It predicts a 21 percent annual decline in cost for leading-edge semiconductors. The pace of technological change depends on a complex set of technology sources that must be precisely coordinated. It may also be influenced by more diffuse factors.

The semiconductor industry has expanded rapidly, particularly in the United States. In fact, the semiconductor industry in the United States has grown faster than the global economy.

It influences manufacturing costs

The semiconductor industry has seen significant profit growth, and Moore's Law has helped push that trend forward. One formulation of the law holds that the number of components per chip quadruples every three years, meaning that each next-generation chip has four times as many components as the current one. Taken on its own, that added complexity would push the chip's price up instead of down.

In practice, however, prices have fallen. As new technology nodes are introduced into the market, the price of leading-edge semiconductors is expected to drop even faster. In fact, the pace of the declines may accelerate to 40 percent annually and beyond. That rate is much faster than what was previously the norm.
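To see what the annual decline rates quoted in this article mean over time, the following Python sketch compounds a 21 percent and a 40 percent yearly price drop. It is a simple illustration of compound decline under the assumption that the rate stays constant, not a model of real chip pricing.

```python
import math


def relative_cost(annual_decline: float, years: float) -> float:
    """Fraction of the original cost left after `years` of steady decline."""
    return (1.0 - annual_decline) ** years


def years_to_halve(annual_decline: float) -> float:
    """Years needed for the cost to fall to half at a steady annual decline."""
    return math.log(0.5) / math.log(1.0 - annual_decline)


if __name__ == "__main__":
    for decline in (0.21, 0.40):
        print(f"{decline:.0%} per year: cost halves in {years_to_halve(decline):.1f} years, "
              f"{relative_cost(decline, 10):.2%} of original cost after 10 years")
```

At 21 percent per year the cost halves roughly every three years, while at 40 percent it halves in well under a year and a half.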

Even though process technologies advance constantly, manufacturers don't always migrate their chips from one technology to the next. A next-generation chip may be more advanced than its predecessor yet not completely compatible with it. If this happens, the new chip must be re-qualified for use. The inconvenience and expense of re-qualification may lead customers to avoid buying the new version, opening up the socket to the competition. This means that the model based on Moore's Law may not be appropriate in some cases.

Moore observed that as the number of components per circuit increases, the unit cost per component at first decreases. Beyond a certain level of complexity, however, the yield of good circuits per 100 circuits manufactured falls, and the cost per component rises again. The point where these two effects balance is the cost minimum. It is the number of components per circuit that can be manufactured at the lowest cost per component.
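The idea of a least-cost component count can be illustrated with a toy model in Python. The sketch below assumes a fixed cost per manufactured circuit and a yield that falls off exponentially with the number of components. Both the exponential yield model and the parameter values are assumptions made for illustration and are not taken from Moore's article.

```python
import math


def yield_fraction(components: int, n0: float) -> float:
    """Assumed yield model: fraction of good circuits falls as exp(-n / n0)."""
    return math.exp(-components / n0)


def cost_per_component(components: int, circuit_cost: float, n0: float) -> float:
    """Cost of one working component, spreading circuit cost over good parts."""
    good_fraction = yield_fraction(components, n0)
    return circuit_cost / (components * good_fraction)


def cheapest_component_count(circuit_cost: float, n0: float, max_components: int) -> int:
    """Search integer component counts for the lowest cost per component."""
    return min(
        range(1, max_components + 1),
        key=lambda n: cost_per_component(n, circuit_cost, n0),
    )


if __name__ == "__main__":
    CIRCUIT_COST = 1.0  # hypothetical cost to manufacture one circuit
    N0 = 50.0           # hypothetical complexity scale of the yield model
    best = cheapest_component_count(CIRCUIT_COST, N0, max_components=500)
    print(f"Lowest cost per component at {best} components per circuit")
    for n in (10, best, 200):
        print(f"  {n:>3} components: {cost_per_component(n, CIRCUIT_COST, N0):.4f} each")
```

In this model the minimum sits where the component count equals the yield scale `N0`. Improving the process (a larger `N0`) moves the minimum toward more components per circuit, which is the qualitative behaviour the cost curve describes.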

These factors all have significant effects on semiconductor industry costs. In fact, the cost of semiconductor manufacturing has been rising faster than the global economy has grown. Changing technology and the competitive landscape have both pushed manufacturing costs in this industry higher. This has created a major need for semiconductor manufacturers to reinvent their roles and optimize their capabilities. However, the challenges remain. For the semiconductor industry to remain competitive, Moore's law alone will not suffice. Successful companies must be innovative and change their business models to meet the changing needs of consumers.

Moore's law is a unique and influential law that describes the development of semiconductor electronics. This law, which was formulated by Gordon E. Moore in 1965, predicts that the number of transistors on a chip will double every two years. This trend has made semiconductors an integral part of many electronics and systems. Consumers and users alike have come to expect better and faster products from their electronics. This phenomenon is expected to continue well into the future.

What Substrates are Involved with GAA Topologies?

In Gate-All-Around (GAA) topologies, several types of substrates can be used, each offering unique benefits for different applications. The choice of substrate is crucial for the overall performance and fabrication of GAA transistors. Here are some of the common substrates involved in GAA topologies:

- Silicon (Si): Silicon remains the most widely used substrate in semiconductor manufacturing, including GAA transistors. It's cost-effective and well-understood, with an extensive infrastructure already in place for its processing. Silicon-based GAA transistors can be integrated with existing CMOS (Complementary Metal-Oxide-Semiconductor) technology, making them attractive for widespread adoption.

- Silicon-on-Insulator (SOI): SOI substrates, where a thin layer of silicon is placed on an insulator like silicon dioxide, are also used in GAA transistor fabrication. SOI can reduce parasitic capacitance, leading to faster transistor switching and reduced power consumption. This makes SOI substrates particularly suitable for high-performance and low-power applications.

- Germanium (Ge): Germanium substrates can be used in GAA transistors due to their higher electron and hole mobility compared to silicon. This can potentially lead to faster transistors. However, germanium is less common and more expensive than silicon, and its integration with standard CMOS technology is more challenging.

- Compound Semiconductors: Materials like Gallium Arsenide (GaAs) and Indium Phosphide (InP) are considered for high-frequency applications. These materials offer higher electron mobility than silicon, making them suitable for radio-frequency (RF) and high-speed electronics. However, they are more expensive and complex to work with than silicon.

- Nanowire and Nanosheet Substrates: For advanced GAA designs, especially those aiming for extreme miniaturization, substrates based on silicon nanowires or nanosheets are being explored. These nanostructures provide excellent electrostatic control of the channel and are highly scalable, though they present significant fabrication challenges.

- 2D Materials: Emerging research is exploring the use of two-dimensional materials like graphene, transition metal dichalcogenides (TMDCs), and black phosphorus as substrates or channel materials for GAA transistors. These materials could potentially offer superior electrical properties, though they are currently in the early stages of research and development.

Each substrate type brings its own set of advantages and challenges, influencing the performance, scalability, cost, and suitability for different applications. The choice of substrate is a key factor in the design and potential success of GAA topologies in semiconductor manufacturing.
